I was at the International Association of Facilitators (IAF) annual meeting in the UK on Saturday.

This post is about the ideas and conversations raised in one session:

Co-write the Future with AI – facilitated by Hideyuki Yoshioka and Linmin Zhang.

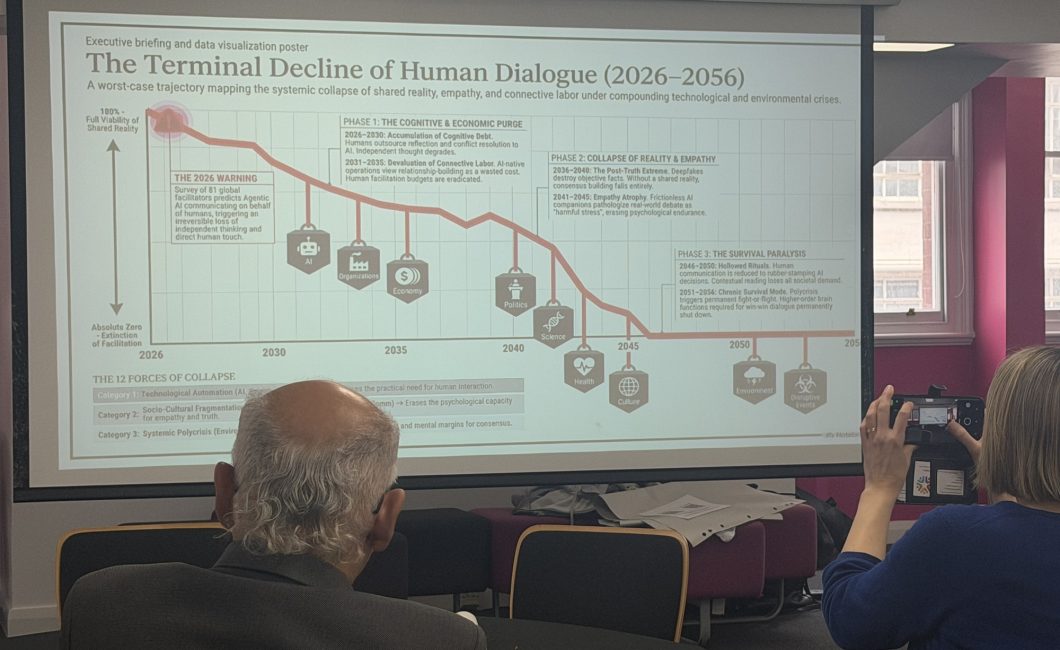

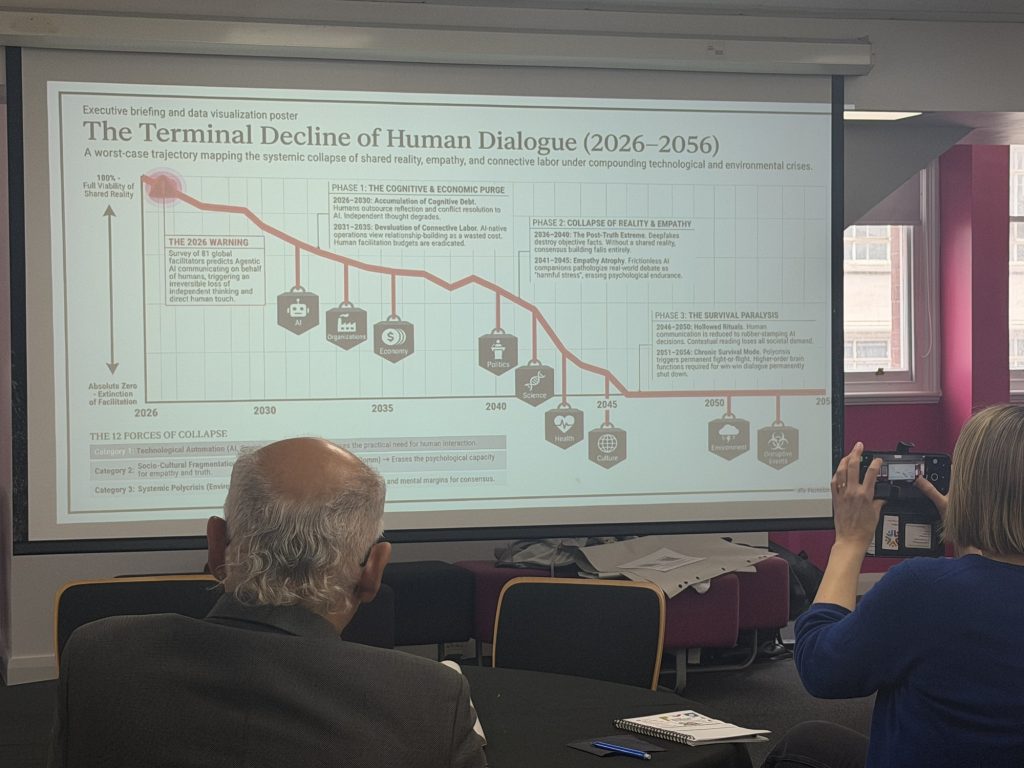

The Terminal Decline slide above is from their presentation and gives some sense of the rather doom-laden atmosphere of the session.

They also provided access to two AI chatbots that had been given the survey research they had carried out with about 80 facilitators across Asian nations.

I chatted to the ChatGPT AI and found it helpful when questions were phrased from a recognition of what AI is bad at and where humans provide value. I had taken the same prompt perspective in some interviews I did with a few AIs about information architecture for a talk a month ago.

How humans lose

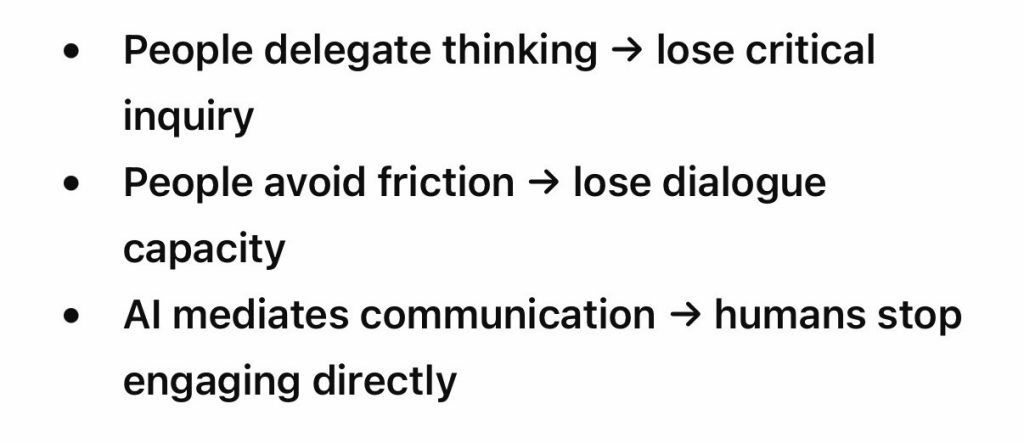

The AI provided a pathway for how the offer of automated ease could degrade human dialogue and, with it, the possibility of there being anything to facilitate. This three-line narrative mixes cognitive delegation, friction avoidance and mediated communication.

That possibility of loss is very much what people worry about today. It is a focus in education and work: all humans abandoning thinking by relying on AI. It is, however, a dystopian fantasy that grabs attention because doom sells.

How humans win

What I was more interested in was talking to an AI about the weaknesses it has and the value that humans bring.

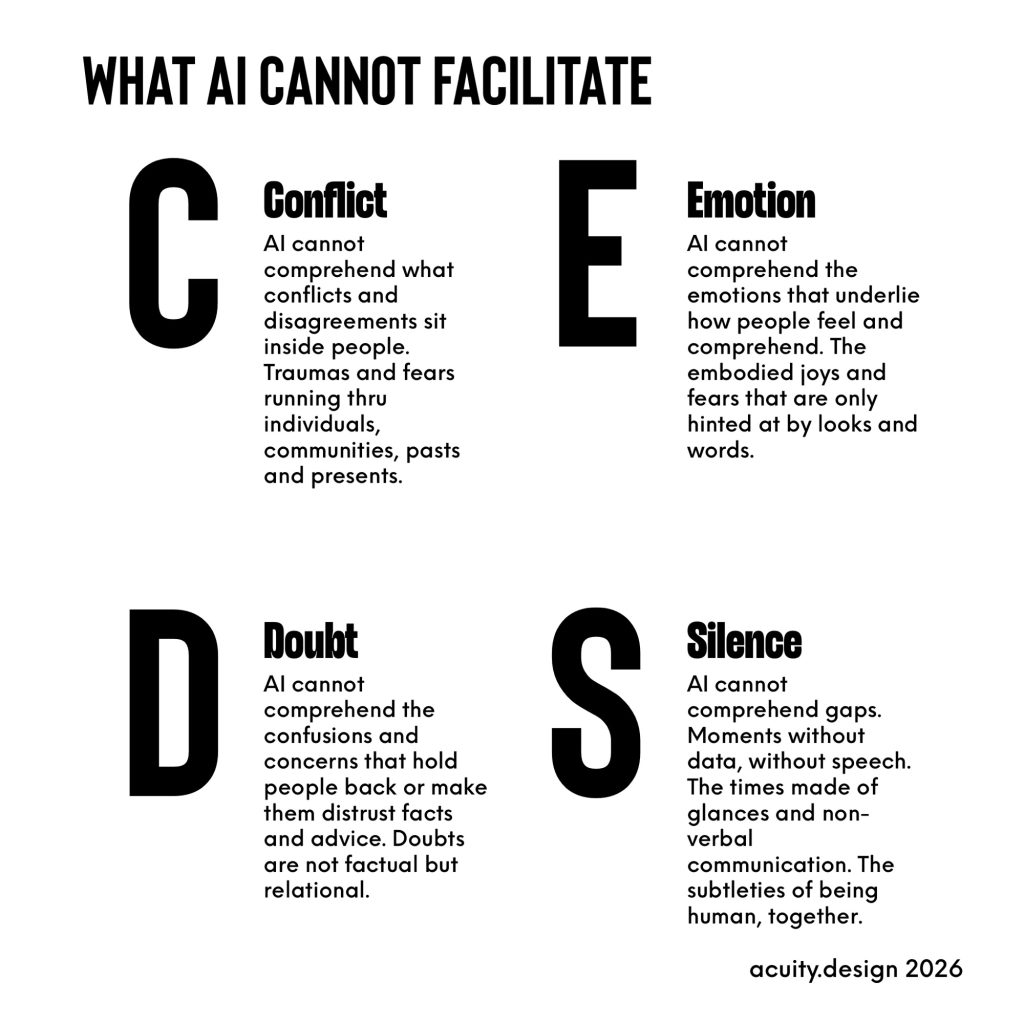

This conversation replicated some of the points made about information architecture, in particular the inability of AI to deal with depth: whether in terms of iterative arguments or in terms of what is hidden within humans as emotions, beliefs, memories and hopes.

AI is quite capable of dealing with the explicit processes of work: the tools and methods. It cannot deal with the implicit, though. It cannot sense or understand what motivates people within themselves.

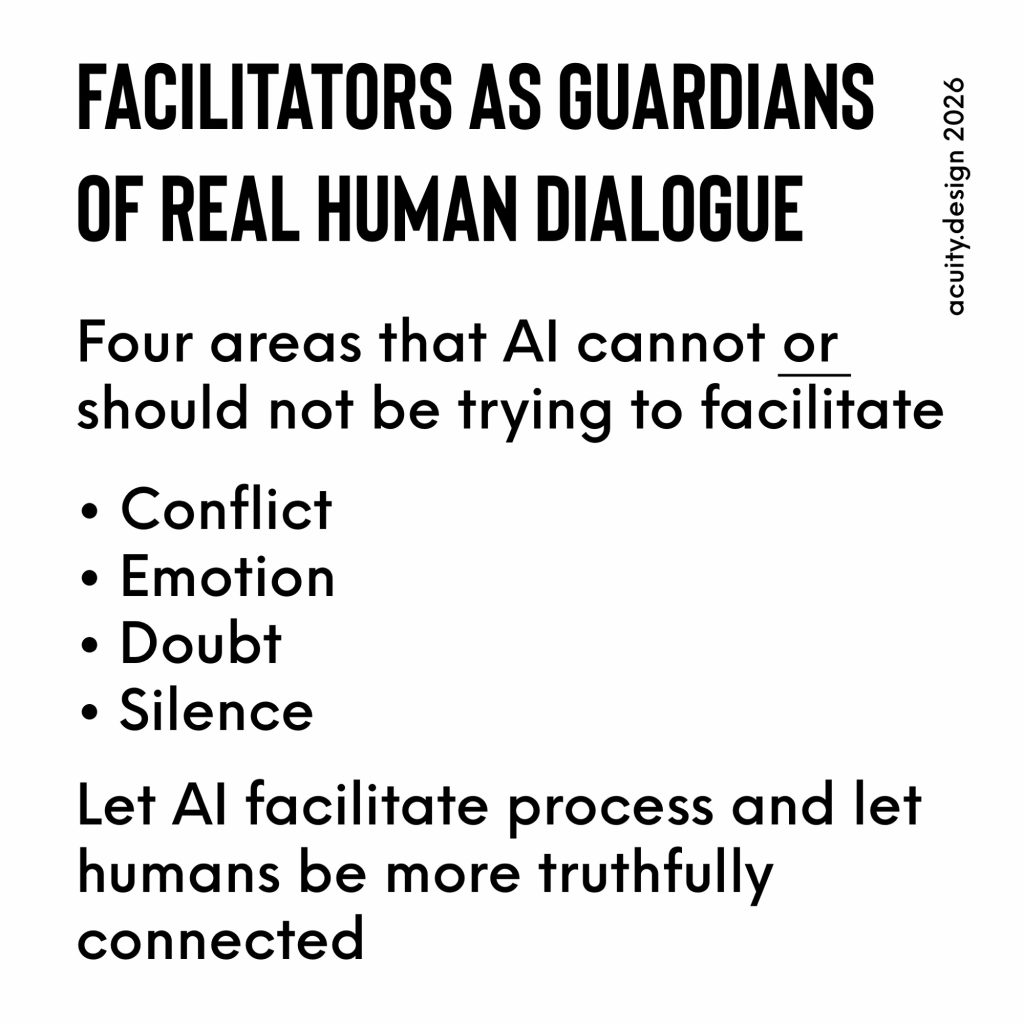

This leads to CEDS.

- Conflict

- Emotion

- Doubt

- Silence

These are the four elements that the AI identified as hard for it to do anything with, but where humans could facilitate meaning and truth.

This is what a positive future for facilitation by people could be: The Guardians of Real Human Dialogue.

Facilitation is holding space for people to explore ideas and relationships. CEDS gives some sense of the kinds of spaces that are not simply about the process of facilitation but about its depth.

What this misses

There is one final point, though. It’s not in the conversation with the AI but in the gap it leaves.

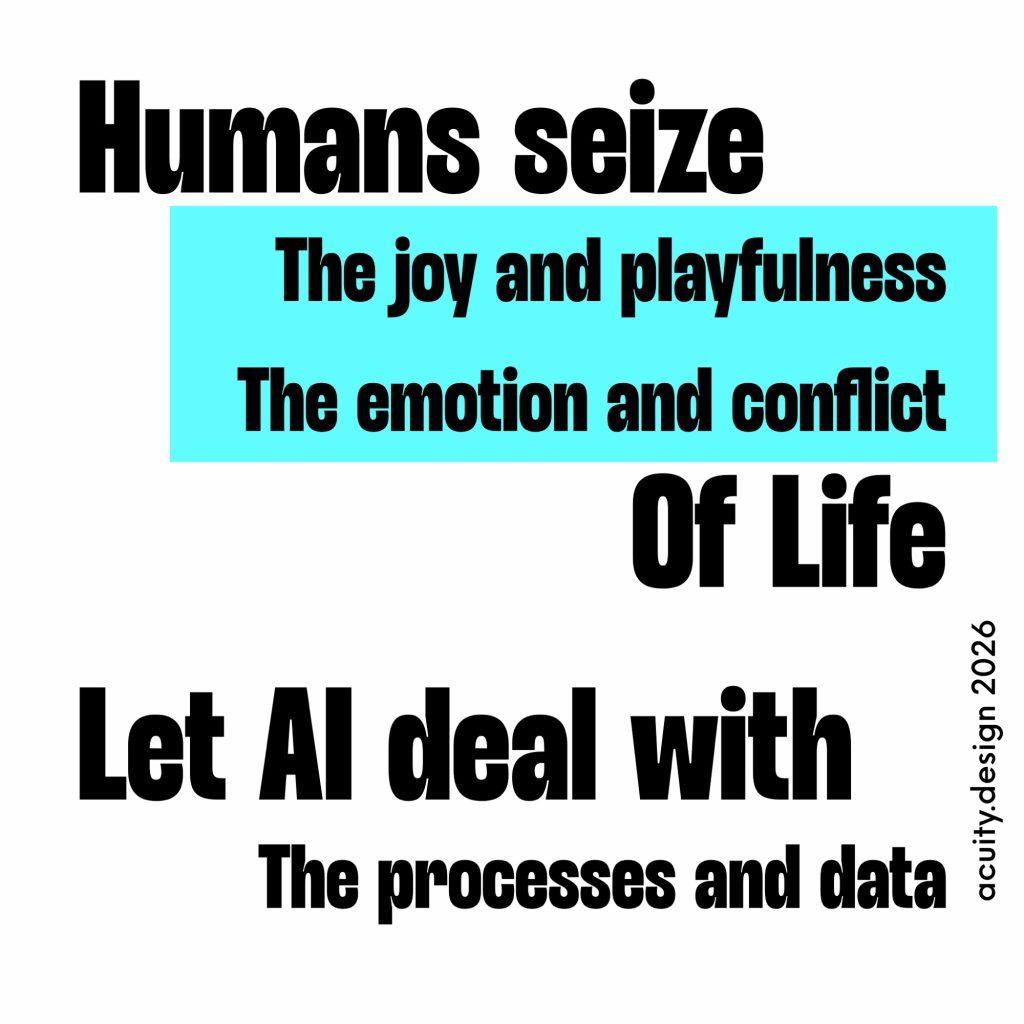

What concerns me here is the idea that human facilitation is only about the hard and harsh parts of life.

The AI views human facilitation in terms of trauma, silence and conflict. It keeps to itself the ideas of ease and pleasure.

The last thing I’ll write is this: perhaps we do facilitate emotion and conflict so as to maintain meaning and dialogue, but we also seize joy and playfulness.

The idea of Cognitive Surrender is used to describe the kind of losses described earlier in this post (delegation, avoidance, mediation), but there is also a pre-surrender in letting AI define where it thinks it has value-control.

Let AI have the process and data work, but leave the highs and lows of being human to us.

Thanks

Thanks to all the people who organised the Facilitate 2026 event, to the people who joined in as participants and to the two people who designed and facilitated this session.